Tagged: blanklabel

Load testing checkout/sales cart process with loader.io

While it’s somewhat of a low priority right now, the developers at BlankLabel have been trying to figure out where and why the worker process jammed the other day when I hit it with 50,000 requests. Visual Studio and its load testing tools will only let them load test unit tests to a point… after that you need to bring in a cloud-based solution like loader.io from SendGrid to hit you till you pass out.

Most programmers do not think about high availability or how the code will perform under massive loads. Many think that it’s the hardware’s responsibility to make sure that the software is highly available, and that it’s the hardware that should make the application scale and perform, but this is just plain wrong. High availability, scalability and performance start at the coding level. When people write code that is scalable, the cost of hardware to cover up the problem goes down, and at some point no amount of hardware will save you from bad code that will bottleneck you in some way or the other.

Code may behave properly when simple unit tests are run at the pre/post check-in and build phase(s); code may even behave when the QA team hits it with their testing and some in-house load tests. But many do not routinely test for high volume because of the effort involved in getting the tests set up. Let’s say you currently have a well-functioning checkout process with a simple flow:

User login/info -> Product Cart Selection -> Checkout

A new feature requires that the user’s last 5 orders are loaded into a session, but for some reason the developer decides to load the entire order history into the session when a user logs in. This unknowingly introduces a defect that, depending on the size of the order history and the number of active sessions, could cause the worker process to crash (we won’t argue about in-proc session storage here). It makes it through unit and QA testing, and in production it leads to a longer checkout process, in some cases a loss of session data, or an error. Eventually, through bug reports and customer support, the issue would be identified and, yes, it would be fixed; but it could have been caught earlier by load testing your critical points of success (or failure) like the checkout, signup or login process.
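To make the defect concrete, here is a minimal Python sketch. It is not BlankLabel’s code (which is not shown in this post and is not written in Python); `Order`, `load_session_buggy` and `load_session_fixed` are hypothetical names, and the 2,000-order history is made up purely for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Order:
    order_id: int
    total: float


def load_session_buggy(history: List[Order]) -> Dict[str, List[Order]]:
    # Defect: the whole order history is copied into the session,
    # so session size grows with every order the user has ever placed.
    return {"orders": list(history)}


def load_session_fixed(history: List[Order], limit: int = 5) -> Dict[str, List[Order]]:
    # Intended behaviour: keep only the most recent `limit` orders.
    # Assumes `history` is sorted oldest-first.
    recent = history[-limit:] if limit > 0 else []
    return {"orders": recent}


# Hypothetical heavy user with 2,000 past orders.
history = [Order(order_id=i, total=49.0) for i in range(1, 2001)]
buggy = load_session_buggy(history)
fixed = load_session_fixed(history)
print(len(buggy["orders"]), len(fixed["orders"]))  # 2000 5
```

A unit test with a handful of orders passes for both versions; the difference only shows up when many sessions with long histories are alive at the same time, which is exactly what a load test creates.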

The setup I created for the developers is a bit complicated but to help explain the concept for this post, using BlankLabel as the test subject, I exposed 3 basic web/call points, LoadLogin, LoadCart and LoadCheckout.

LoadLogin uses the user object and uses the data that is passed to simulate a login for the test user using the existing code.

LoadCart uses the Item object and populates a Cart with the data that is passed to simulate a user adding items to a cart.

LoadCheckout uses the Process Order methods to simulate a checkout and sends out an email with the order details (data captured in LoadLogin and LoadCart)

Most do mock test the above with unit testing but the unit testing would not have triggered the performance related issues caused by a high number of active users with large order history data being loaded upon user login.
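As a rough local illustration of that three-step flow, the sketch below runs many simulated users through stand-in functions concurrently. Everything in it is an assumption: `load_login`, `load_cart` and `load_checkout` are hypothetical in-memory stand-ins for the real call points, and no HTTP requests or emails are involved.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, List


def load_login(user_id: int) -> Dict:
    # Stand-in for the LoadLogin call point: simulate a login for a test user.
    return {"user_id": user_id, "cart": []}


def load_cart(session: Dict, items: List[str]) -> Dict:
    # Stand-in for LoadCart: populate the cart with the data passed in.
    session["cart"].extend(items)
    return session


def load_checkout(session: Dict) -> Dict:
    # Stand-in for LoadCheckout: turn the session into an order record.
    return {"user_id": session["user_id"], "items": list(session["cart"])}


def run_flow(user_id: int) -> Dict:
    # One simulated user walks the flow in order: login -> cart -> checkout.
    session = load_login(user_id)
    session = load_cart(session, ["shirt-a", "shirt-b"])
    return load_checkout(session)


def run_load(users: int, workers: int = 8) -> List[Dict]:
    # Run many simulated users concurrently and collect their orders.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(run_flow, range(users)))


orders = run_load(15)
print(len(orders))  # 15
```

Checking the collected orders afterwards (rather than counting emails) is the same idea as verifying the rows in the database once the real test has finished.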

Using loader.io we are able to create a test that will first hit LoadLogin then LoadCart and then finally LoadCheckout; in each case passing values. Below is a simple screenshot that illustrates this simple test.

Note: Currently it seems like the URLs have to be provided in reverse order rather than in order, so read the URL list from the bottom up.

This test will make 1,500 connections; each connection will make the URL calls (in reverse order) once and hold the connection open for 15 seconds. The connection limit can be increased to 50,000 and you can hold each connection for 60 seconds if you like, but if each connection requires 20 KB for its session, you will need the appropriate amount of RAM (50,000 × 20 KB = 1,000,000 KB, or roughly 976 MB).
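The session memory arithmetic is easy to get wrong by a factor of 1,024, so here is a small Python sketch of it. The 20 KB per session figure is the example value from the paragraph above; the function names are made up for illustration, and 1 KB is taken as 1,024 bytes.

```python
def session_memory_bytes(connections: int, kb_per_session: float) -> int:
    # Total session memory, taking 1 KB = 1024 bytes.
    return int(connections * kb_per_session * 1024)


def human(n_bytes: int) -> str:
    # Format bytes as MB or GB (binary units).
    mb = n_bytes / 1024**2
    return f"{mb / 1024:.2f} GB" if mb >= 1024 else f"{mb:.1f} MB"


print(human(session_memory_bytes(1500, 20)))    # 29.3 MB
print(human(session_memory_bytes(50_000, 20)))  # 976.6 MB
```

So 50,000 sessions at 20 KB each come to a little under 1 GB of session data.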

If you are sending out emails, you will end up with 1,500 emails (it may be smarter to disable the emails in the test and just look at the data stored in the DB after order completion to confirm that 1,500 orders were placed with the correct data). As you can guess, I did not click on “start this test now” for 1,500, but I did try it with 15 🙂

Why is any of this important?

In my opinion, services like loader.io help you break things quicker; if you can break things quicker, you can fix them quicker. You can also use it to routinely verify that code/releases you put out do not negatively impact performance by automating load testing by integrating loader.io with your build/test scripts through their API.

Everyone should routinely loadtest their unit tests and plan for growth as I learnt the hard way a couple of years ago…..

Using loader.io to test the cloud

When things are going well we often forget about infrastructure, maintenance, scaling and risk; this is especially true when your servers are sitting somewhere in the cloud and you assume that they will “scale” somewhat “automatically” when the services detect that your application needs more resources… Unless you have Chaos Monkey to keep you on your toes, you are going to have to revisit the past yourself once in a while.

I have been meaning to take a look at what we put together for blanklabel back in 2010 only because I know that there is still a lot more work to be done… but preparing load tests that hit various aspects of the infrastructure is time consuming… you have to capture the flow making sure that you hit the web, database, code, bandwidth and cdn resources where each might already be further cloudified and highly available.

I play with shiny toys every now and then, and recently my shiny toy is loader.io; meanwhile, BlankLabel has been using SendGrid since 2010 to send order confirmation emails to users (who eagerly await them). So, what did I gain from using loader.io today? The other day I had no problems with a 10k hit (visiting 1 static URL); today I tried to hit it with 50k hits, 3 heavy URLs per connection… and below are the results.

Yes, the test server (I didn’t run this against production) failed at some point and stopped responding, but it’s not as simple as that. The infrastructure did not actually fail; the timeouts and 500s happened because the worker process got stuck… which means that there is some bad code that can cause a bottleneck before the infrastructure fails or successfully adjusts resources. Since I had repeated 10k tests a few times before trying a 50k test, it’s also possible that the cloud admin had already blocked or killed the incoming requests, which would have impacted my testing… but why leave it open as a risk? In addition to doing a code review, I need to target the workflow correctly; it is quite possible that I did not set things up correctly in the first place.

If you have not checked out loader.io, you should! For me there is a long road ahead, with lots of things to be learnt (and improved).

Creating virtual products on the virtual cloud – Amazon RenderFarm

When we first started talking about what the web based Blank Label experience was going to be like my concern was the visual quality of the product our customers were going to see in the shirt designer. Blank Label allows you to create several thousands of custom shirt design combinations; building a physical product per combination to take a photograph would have been very very very expensive.

Not being able to physically touch the product, not being able to feel the cloth and not having a good visual of what the product would look like added up to a lot of “nots” that I was not okay with… so, not knowing anything about 3D modeling or shirts, I decided to take on the challenge of figuring out how we could come up with a modeling technology that would yield a somewhat realistic shirt… after all, anyone can easily tell a real shirt from a rendered shirt…. right?

The first mistake I made was trying to find a company that could create what I needed. There were many, and once I explained the idea behind how I wanted it to work and render, the cost went from $14k to $48k to … over $100k… Yes, I know it’s not cheap to design and render a model, but just because they couldn’t figure out how to automate actions and export clippings (I won’t go into details), it did not mean that the client should foot the bill…. Anyway, the second mistake I made…. which was the right one… was that I hired a 3D modeler (overseas) to work with me and understand what I was trying to create…. I did not learn much about 3D modeling, but I did learn more than I will ever want to know about a shirt during this time…. Anyway, let’s look at what we created back in the day.

Version 1 (2009)

Version 1 wasn’t that bad… but it also wasn’t that good. It did a decent job of showing your fabrics and other selections, but the realism was lost. So, fast forward several months, we came out with version 2 with a different 3D modeler, and again several months after that, version 3 with another modeler (both below).

Version 2 (2010)

version 3 (2011)

The problem with each (besides not being realistic enough) was that the modelers themselves did not understand the anatomy of the shirt, and because they didn’t, they couldn’t create a model that behaved like a real shirt. On top of that, they didn’t really understand the need and desire behind having things done a certain way, because they had never worked on a model that was controlled by code…. At some point we needed to add more features to the shirt, and since each modeler we had been through had a non-automated way of generating renders, it would take weeks if not months to get 10-15 fabrics rendered…. so I went back to the drawing board, and looked hard…

By now I had some understanding of how the models work and how things needed to be created, and fortunately for me I found someone who was willing to understand the shirt and the entire process behind what I was trying to do. The best part about this was that he said “I don’t know if I can do this, I have never done this, I don’t know if it will work, but if you trust me and can be patient, I can give it my best shot.”

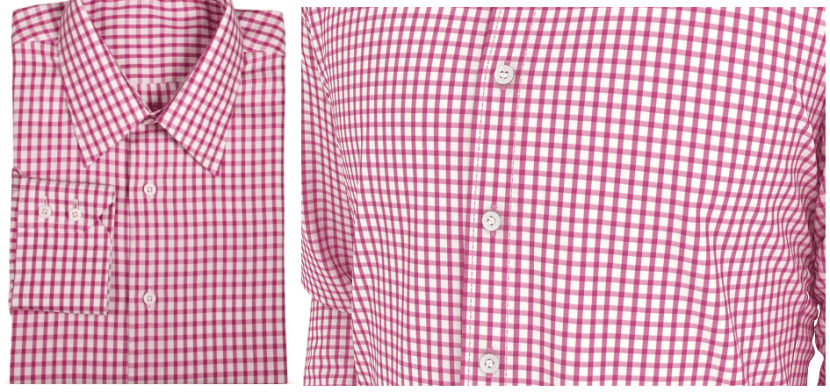

See the image below, they are both zoom shots

In this image, the one on the left is a picture of a real shirt; the one on the right is a rendered zoom shot of the chest area

below is version 4 (current: 2012) of the shirt

With version 4, we have achieved enough realism. There was a lot of attention to detail; both he and I understood every single part of the shirt: how it’s stitched, how the layers go on each other, etc… and the model we have now is not just realistic-ish, it’s also automated… If you head over to Blank Label you will see that there are several rendered views/zooms of the shirt… which finally brings me to the topic of this post.

We used to render the model on his machines. He had a small network of 3 machines with a decent amount of CPU power, and he would spit out 5 rendered fabrics (each is 86 frames) each day, at approx 1.5-2 hours per render. Not that bad… but when we launch new fabric selections, we usually launch 30+ at a time, which means 2 weeks to get those rendered, and that is if he isn’t busy rendering other stuff….

We looked at the render farms that are out there…. way too overpriced, and you have to hand over your files… your intellectual property… to a third party. Who wants to do that? So we thought that we would give the Amazon (AWS) cloud a try…. In theory it sounds great, and there are a few articles out there on how to accomplish it, but they are based on theory…

The best part is that I had no idea what I was doing and it was going to be a challenge to figure it out…after going through the motions and having burnt 20 hours; I can confirm that it works, but it takes time and patience.

To build our render farm on Amazon AWS using only EC2 instances, I simply took a Windows Server 2008 R2 AMI and started configuring it, installing the trial version of 3D Studio Max and setting it up. Once it was set up, we had the issue of “Who would be the manager?”… We decided that it made the most sense to have the 3D modeler be the manager, so we opened up ports through his firewall into his machine, and voilà, the render server connected to his machine and was ready to accept work…. There was just one issue: the files on his machine mapped to a UNC path that did not exist on Amazon…. In hindsight, I would have had him fix it a different way, but we ended up mapping everything to drive Z (it took several hours to remap), and once remapped, we used Google Drive to sync between the Amazon cloud instance and his machine. Once synced, the server started doing work and started spitting out renders….

Great… but that’s just 1 machine though…

Once the first machine worked, it was pretty challenging to set up a repeatable image, i.e. an image that you could just start and it would automatically connect to the manager and start doing work. It’s not as easy as it sounds: there are permissions that need to be set up, things that need to run as a service, virtual folder-to-drive mappings that need to be created and, hardest of all, you have to ensure that the machines connect to the manager. 🙂

I will definitely dedicate a post to walk through an amazon render-farm setup; but I will leave you with this.

What used to take 1.5 – 2.0 hours per fabric now takes 15-20 minutes with 7 virtual machines; up that to 14 machines and your time is cut roughly in half.
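As a back-of-the-envelope sketch of that scaling (in Python, with made-up function names), assume ideal linear scaling, i.e. render time per fabric is inversely proportional to the number of machines. Real farms lose some time to syncing and startup, so treat these as best-case numbers. The 30-fabric batch is the typical launch size mentioned earlier.

```python
def minutes_per_fabric(base_minutes: float, base_machines: int, machines: int) -> float:
    # Ideal linear scaling: render time is inversely proportional to machine count.
    return base_minutes * base_machines / machines


def batch_hours(fabrics: int, minutes_each: float) -> float:
    # Fabrics are rendered one after another, each spread across the whole farm.
    return fabrics * minutes_each / 60


for machines in (7, 14):
    low = minutes_per_fabric(15, 7, machines)   # optimistic end of the 15-20 min range
    high = minutes_per_fabric(20, 7, machines)  # pessimistic end
    print(machines, low, high, batch_hours(30, low), batch_hours(30, high))
# 7 15.0 20.0 7.5 10.0
# 14 7.5 10.0 3.75 5.0
```

Under that assumption, a 30-fabric launch is a 7.5 to 10 hour job on 7 machines and about half that on 14.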

This render-farm allows us to create our virtual products much quicker, virtually on the cloud.

The best type of customer

Recently thinking about sales, user experience and friction points for blanklabel.com (blank-label.com) I started thinking about our customers…

And soon I was trying to answer the age old question that plagues many, “What type of a customer qualifies as the best customer?”

I pondered: “Is a customer who knows what they are looking for the best customer?” Or is a customer who I can educate the best customer? Money? Male? Female? Age? Income?….

After getting nowhere… I decided to analyze “what type of a customer am I?” and then it hit me…

“I am the best customer”!

So what type of a customer am I?

I am an impulse buyer… And impulse buyers, in my opinion, are the best and the hardest to sell to…

Why?

Well, we have the money and will spend it in a couple of seconds and will think about our decision later… What makes that hard? Well, impulse buyers have an attention span that goes from “must buy” to “I’ll think about it” to “won’t think about it again” in a few seconds….

Are most buyers of this type? My observations tell me so; but will need to collect some statistics to prove this observation. High bounce rates for sites/pages that cannot successfully funnel a sale in a few seconds (clicks) will validate my claim.

If you have to educate this customer; you’ve probably lost the impulse sale, but may get them at a later time.

So how do you sell to buyers like me?

Convince the user that it’s okay to buy on impulse: telling them you will happily refund their money if they are not happy feeds into their impulse.

Don’t make me enter a whole lot of info, capture my credit card ASAP.

If I can connect to the details visually; don’t make me read

Do not give me a lot of options, funnel me!

Most buyers don’t know they want to buy something…. Most buyers are browsing the net aimlessly… I certainly am…

If it isn’t

1. That’s cool, I could use that

2. I believe that it’s worth the $

3. Ready to buy

4. Sold

5. Within 1-120 seconds

…..You’ve lost a sale.

Startups – importance of your team

As far as I can recall, I have always been trying to build something.

In high school it was a community magazine. In college it was a BBS (bulletin board system).. Then towards the mid/end of the 90’s it was a subscription based web publication system and an online food court.

Let’s just say it was the wrong county and maybe the wrong time.

But moving forward came the idea of an online community that connected people, services and things based on geolocation…. This is before twitter, facebook, myspace, groupon, etc, etc.

Re-evaluating all my projects; I had realized that it was the “lone-star”ness that should be blamed for the failures.

I decided all my projects eventually failed because I was doing everything myself, eventually burning out and losing interest in my own project.

In August/September 2009 I decided I was going to have a team for my next venture. I started looking at startup job postings, contacting startups, etc… It wasn’t going anywhere until I found a post on Twitter from Fan, who was looking for a techie to help with blanklabel.com. I reached out, since online tailoring was another idea I had toyed with in the early 2000s…. A year+ later, blanklabel.com is growing at a rate that far exceeds the expectations of our followers and probably our competition.

At home, I often used to find an abandoned coffee cup: forgotten, unfinished and left in place. Credit goes to the now wife… I would ask her “what the hell is this?” and her response would be “a chemistry experiment”… hence my motivation for making the following analogy…

A team is like the tube in a particle reactor and the particles are the team members… you could say it’s an experiment where the tube contains the particles and helps them move in the desired direction. Why is it an experiment? Well, you hope that the constant colliding will produce the energy/effect desired and not blow up the entire reactor. So it’s also important that these particles mix well.

Without this tube and other particles, I’d still be a free radical… A lone star that would eventually burn out and have no potential of creating and being part of a “big bang”.