Tagged: metrics

Maturing from a StartUp to a StartedUp culture – Series Part 3

Several years ago I had a Volvo (88 760 GLE) and one day I noticed little streams of smoke from under the hood every time I would get back home; I had little experience with cars back then so I took it to a friend’s dads shop. I should have probably left when I got there because there was a customer yelling at him for messing up his beetle and charging him extra to fix it; apparently he put some hoses on wrong and then had to redo the work, I wasn’t there for the whole story, just the last 20 minutes of it and then the customer drove off.

My friend’s dad asked me to start my car and pull up next to him and leave the car running. He popped the hood and started to look around, he checked the hoses, looked at the pump, lines, drove it around, several hours passed by… he went from suggesting that there was coolant leak, to transmission leak to radiator oil leak… several more hours passed by as he came up with theories and looking at things… after being there for about 6 hours I decided to stick my head in and look at the engine block near the side where I told him to look and thought the smoke was coming from. Sure enough, just as I looked, I saw bubbles near the engine block’s cover, pointed it out to him and he said “ah, it’s loose! oil is getting out”; brought over his tools, tightened it and the problem solved.

I had been there for 7 hours, he wasn’t the type of guy who would say “this is my son’s friend, I am going to help him out”; he was a business man and to him I was a customer. I was upset with him for wasting 7 hours of my time; but what was really on my mind at that time was “how much will these 7 hours cost me?”, especially since he had a sign posted that had “Service hour rate: $45/hr” in big letters…. I will get back to this later in the post.

KPI and metrics

Man hours, hours, T-shirt size, story points, etc are measurements. I will try my best to not go down a rabbit hole with scrum, story point’s vs hour estimates… I will not! and hopefully I won’t lose my original messaging in all of this. Let’s start with this; at some point or the other, the focus and bottom line for a company will be “shipping product” against a “delivery schedule”. People, process, culture, story points, hour estimates, etc. will eventually stop existing if the “startedup” company cannot ship product and closes down (the focus here is shipping product according to other peoples expectations, i.e investors, C-suite, etc). With this at the back of our minds let’s continue on.

Story points are a measure of risk and/or effort and/or complexity (the and/or is there for the ones who disagree that story points do not measure complexity and/or risk).

Work/Task estimates are hours (usually) it takes to complete a task (with risk, complexity and effort already factored in).

Some argue that story points are just a block of time that provide the developers with padding; some argue that story points and hour estimates are not the same; some argue that time estimates need to be detailed and you should only use blocks of time (i.e. story points). I am not here to argue about any of these.

If you look at what part story points and sprints play: Story points (representing stories) go into sprints and sprints are boxed in time; at the end of the day, we are basically fighting for time. Others (Non-developers) usually want to know “how long will it take”, “when will it be done” because they need to set schedules, communicate to others, but (most) developers just want to work 🙂

How can I tell you how long it will take to fix when I have not even looked at the code; code that someone else wrote years ago!

When you have your team of 10 who have been working on a project (or two); the story points, velocity charts and estimations work out great. The team of 10 will negotiate points and the best suited person will do the work; everyone starts getting a good idea of what others and they themselves can do with improved accuracy (and velocity).

What risk can come up when you throw in 65 new hires and 2 new projects?

One thing that can happen is that the wrong person can get the wrong story; it is also possible that the new team may incorrectly estimate story points.

This happens or can happen because it takes experience and familiarity to get both (story point estimates and story assignment) of these right. Let’s say everyone is working on their tasks for a sprint, there is 1 story (something to do with SSL) remaining and it MUST make the sprint (which closes sooner than the story can be worked); the one developer available knows NOTHING about SSL, and a simple change measured as 2 story point remains, there is a developer on the team who knows about SSL but she is already working on a different feature that requires her knowledge on encryption.

Why did this get set as 2 story point when it was obvious to the team that there was risk? Rather than negotiate for 5 points the team settled for 2 because they expected the more senior programmer to have a better idea of how many story points it would take; the senior programmer saw no problem with 2, because in the past, her team was comfortable with it being 2. Repeat this several times, and you have a pattern; how do you break this pattern? or how would you even recognize this pattern? How would someone have suggested 5? How do you further refine estimating story points so that its not just based on gut, experience or familiarity? You start building and tracking additional KPI’s/metrics. Velocity and Burn Up/Down charts are common KPI’s that most use, you need more to help fish out patterns and gaps.

I think it’s important to acknowledge that in a true scrum setup (a perfect world, which is possible) these things may not happen (or happen rarely); if something doesn’t make a sprint, it moves to the next, but in most (all) of the places I have worked at, true scrums do not exist, shit happens and you cannot NOT make the dates; unless the team pulls together, works OT and possibly burns out (if it keeps happening).

As many others do, I like to base my estimation on experience and collectively agree with a team; but wouldn’t it be easier if there was another set of metrics that provided extra assurance or a reality check? i.e. historical data. Either metrics against tasks or metrics against similar stories. i.e. a story around “user login” averages to be a 4 point story based on previous similar stories; a task to “check credentials against db” takes 2 hours? The metrics can be captured after sprints/projects are done in adhoc meetings or release review meetings the data would be used for new hires, for times when things are under/over estimated. New hires and others could use this data to help estimate and understand gaps between what it takes on average and how the teams perform; the KPI’s would further detail teams health…

Going back to the Vovlo; I was waiting at his desk while he put his tools away, then he walked back to his desk, opened up a book, flipped pages to a section that read “Diagnostics”, found a line item for “Oil Leak” and sub-item “Gasket”, and said “2 hours, so you owe me $90 for fixing the problem”. Even though he spent 7 hours on it, he charged me for 2 because that’s what the book that had metrics for that type of service said it should take.

The Volvo example is important to me because it identifies performance issues; i.e. he should have done it in less than 2 hours if he was a good mechanic because that’s how long it takes on average; he should be asking himself “why did it take me more than 3 times as long and how can I do this better” because that’s what we would use similar development metrics for. “I seem to always under estimate UX changes, I need to pay better attention”.

The example I used has so far revolved around a startup company of 10 growing to a startedup company of 65; let me use a different example: A software development boutique is agile and they have client projects captured in back to back sprints. There are account executives that double as product owners who talk to the customers and based on experience and some dialogue with a few dev leads, they estimate effort and agree to a schedule and budget. Once they are ready to start the sprint (for a new project) the dev leads will update the team and as soon as sprint planning (stories placed) is done they start rolling.

A few issues:

- The dev lead and account executive time-boxed the maximum amount of time it can take based on their meetings with the customer; there was no team review

- Account executives double as product owners; their stories aren’t reviewed by developers until the work actually starts since developers are already busy on other projects

- There is no room for scope creep; things cannot get thrown out since this is a client project, and it must meet a date

- When there is scope creep and because its boxed; resources will work over time and burn out since the cycle just repeats it self – regardless of what your story points are, they have to fit in the sprints.

You could point out that the issue here is that there isn’t team involvement with the original estimation (for the time box) but this is because of how the company chooses to operate, so you cannot change this. You could state that the issue here is that there will always be some sort of scope creep so you cannot expect a hard stop but this is also because of how the company caters to customers expectations and needs to operate.

I would argue that the issue here is that the account executives do not have a “rate book” or “performance history” for similar tasks/stories that can help them come up with better estimates and factor in complexity when needed. In addition to that, since this company is in a pattern of running over (and solving by having people work over time, every time) there should be some sort of analysis done after each project to come up with “mistakes made” or “lessons learnt” so that people can learn from the patterns and put out better estimates; there will be times where you cannot change the entire companies culture, so instead you need to look for what can be improved.

With focus on additional KPI and metrics; one can identify issues with process or gaps before they become a bigger problem; Don’t just stick with how things worked when you were a startup and expect things to continue to always be perfect once you start growing, when you are “startedup” you need to start looking at adding new KPI’s and measurements that will help the bigger team work better and scale.

Building high performing teams by showing your employees how they can do better

This topic builds upon the previous “Building high performing teams by telling your employees they suck” (its not as simple as telling someone “you suck”); the emphasis really comes down to communication (showing) and attributes (why) they are not performing.

I learnt very quickly in my career that quality metrics do not lie and can easily convince to win an argument. I also learnt that pictures and diagrams help me explain (and understand) things much quicker than words alone. This is why, whenever I have had to prove something to myself, or explain a point; I have turned to metrics and diagrams.

So lets first cover some basics

Many companies do an “annual review” dance; some do it twice a year, some don’t even do it (we wont talk about them)… and during this review, the expectation or assumption is that the person being reviewed has been working towards their goals that were defined the year prior; or that if goals had changed, then things would have been appropriately documented to reflect that change…. Truth is.. we do not have time for this; especially when you are in a high demand team where priorities change on you all the time. However; if you have been actively carrying out your 1-on-1‘s and have been using it to develop the person/your team and have some sort of career path or individual development plan in place; you may be well prepared for this annual review – Great for you!

Some lucky ones get an annual, semi-annual (or sooner) 360 review; I believe this provides more value than an annual review because it lets you hook into career development much sooner and/or it will give you insights to how you efforts are perceived by a larger audience who may be working with you on much closer day-to-day basis; this can one stay on top of their game if its done in short enough period as it is a great motivator. Managers can then review the trend over time and see how one is progressing to give a more accurate review.

At the end of the day, with all these reviews, coaching, development plans, etc. what we are really trying to do is grow people (or remove the ones that wont grow), make sure they perform and build teams that work well.

High-performing teams don’t just happen, they are made.

It is my opinion that in order to build high performing teams that work well; you have to instil a culture around “working well”. The annual, semi-annual, etc reviews really need to tie into a day-to-day type of thing; it may be seen as a lot of work (initially true), but just like good and frequent maintenance goes a long way, so does this.

To recap; what I have just stated is that we should focus on reviewing everyone on more frequent basis; something that ties into the day-to-day and can be captured daily or weekly… or best, if it can be captured adhoc as long as its captured within some frequent frequency. We would of course want this to be documentable, repeatable, measurable and actionable… we would want a process.

So, if this all has not added up yet, we want quality metrics that will provide diagrams/results based on process that can be used to communicate performance.

Every couple of weeks; I will ask my leads “without any thought into this, respond to my email and stack-rank your team”; or I will ask “If you had to do a project, who are the 3 people, in order of preference, you would pick”. Then there will be the “who is the most active volunteer” or “who is the least to volunteer”… While these questions lead to answers that I plugin to my brain somewhere and maintain on spread sheets… they do not translate to quality metrics because they are not standardized enough… nor really repeatable.. (and probably other reasons as well). Another flaw with my method of capturing metrics is that it doesn’t allow “everyone”offer an opinion; only the ones I happen to ask or interact with that day. So while I may be able to “wing-it” and come up with diagrams based on some metrics… we need to focus on improving the quality of these metrics.

Quality Metrics

What does “team work” mean to you? What are the attributes of a team player? What type of people do you want to work with? or what type of people do you want working for you?

Here are some interesting data points that one can use a 1-5 scale to rate someone on (in no particular order)

- Reliability

- Constructive communicator

- Active listener

- Problem solver

- Active participant

- Shares solution/ideas

- Volunteers assistance

- Flexible

- Customer focused

- Respectful

In addition to defining the data points; you want to make sure that everyone has an opportunity to provide this data on “any one” without a need for self-identification (remain anonymous) and you will want some way of reminding people to go back and update (or resubmit) data at some frequency.

Creating a process around capturing these metrics and emphasizing the need to submit feedback sends a strong message to your team(s) that this is important.

Communication

Once you have reviewed, tweaked and confirmed (adhoc charts) that you are capturing data that is meaningful; its time your shared the results with the team (where appropriate) and with individuals (as needed).

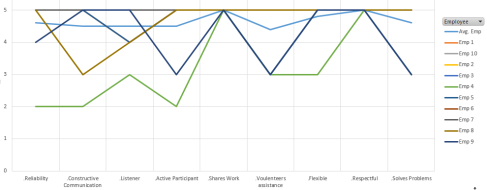

The diagram below is probably more for your own viewing (rather than sharing with the team)

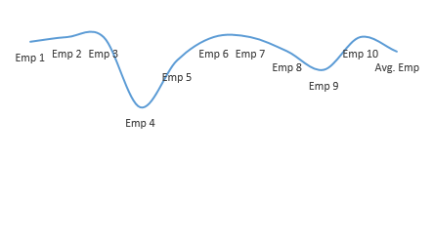

This diagram identifies the low and high scorers (averages of combined attributes); and you can see from above, Employee 4 is a low scorer.. (bottom of stack rank)

This diagram identifies the low and high scorers (averages of combined attributes); and you can see from above, Employee 4 is a low scorer.. (bottom of stack rank)

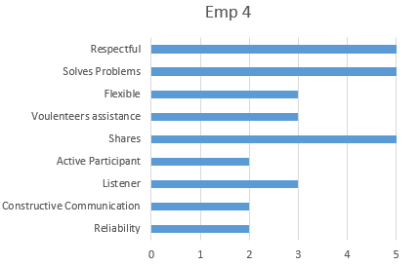

You may want to dive deeper into this, and you will see

Where everyone lies based on attribute; is there a deviation for anyone? or does everyone score low in a specific category?

Again, from the above two it seems like Employee 4 isn’t at par with the rest.

Communicate

Communicate how/why/where they are not performing… a picture says a lot more than words, see below:

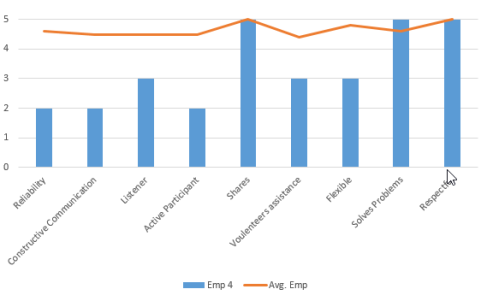

This chart can be used in a 1-on-1 with Employee 4 to go over their specific performance; and can be tied in with what the “average employee” scores (which is just avg score per attribute), see image below

This image that compares employee 4 to the average employee gives the employee actionable data that is backed by metrics. They can then use this information to focus improvement efforts.

Conclusion

So while its easy to focus on the literal words used. The goal should never be to tell someone that they suck (and stop at that); it should be to help drive change, development and improvement in someone by clearly communicating areas they need to focus on so that you can build a high performing team.